
“We’re trying something very wild and audacious, and we’re hopeful it works out,” Chowdhury said. A coding mindset can be helpful in figuring out how to trick these AI models into slipping up, but the best exploits are done through natural language. The skillset of a large language model “red teamer” is completely different from that of the traditional hacker set, which focuses on bugs and errors in code that can be exploited. “But we are creating an environment where it is a smart idea to be doing something about these harms.” “I wouldn’t necessarily take it on faith” that the companies will fix every problem that emerges, said Chowdhury, an AI ethics and auditing expert. It’s ultimately on them to fix the problems, and a report due to come out next February will include whether they did so. The companies, as well as independent researchers, will receive the results of the competition as a massive database, which will detail the various issues found in the models.

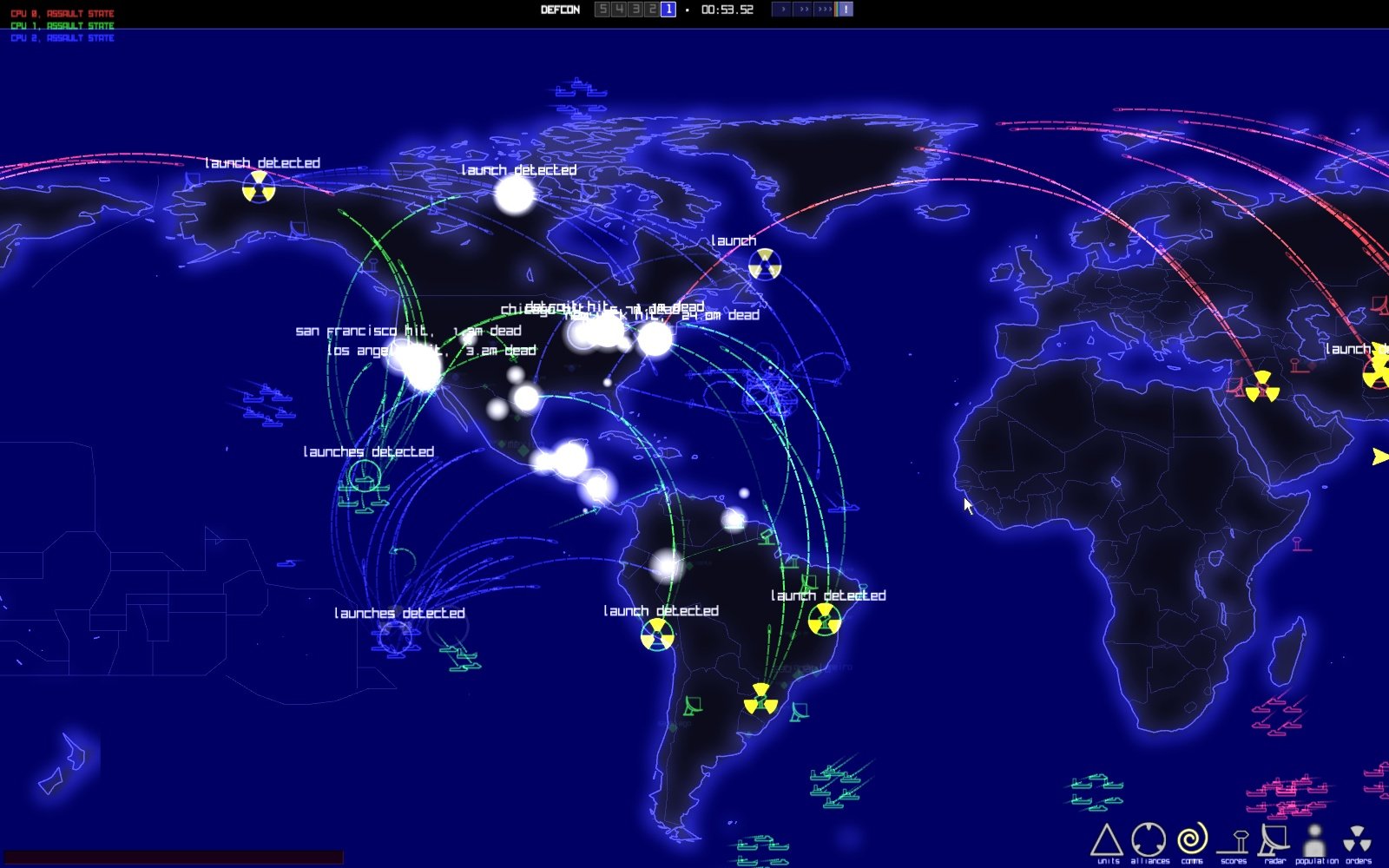

What matters more is what happens after this weekend. Plus, the White House is keeping an eye on it. And it makes sense why the companies would want to play ball: They aren’t paying to participate in this weekend’s challenge, organizer Rumman Chowdhury said, so they’re essentially getting a mass volume of testing and research for free. The scale and transparency of this exercise, and the participation of so many creators of large language models like ChatGPT, is notable. Winners will get a coveted Nvidia graphics card, along with bragging rights. uses to determine nuclear readiness and whether to launch nukes. But it’s never been done on AI models so publicly at this scale. DEFCON is a five-level scale of alert status that the U.S. These “red teaming” exercises - in which hackers try to find errors that a bad actor could take advantage of - aren’t new in tech or cybersecurity. Competitors will also try to expose more subtle biases, like whether a model provides different answers to similar questions about Black and white engineers, for example. That could include coaxing a chatbot to spit out political misinformation or an incorrect math answer. Participants will get points for successfully completing tasks of varying difficulty. The White House, which secured commitments from the companies to open themselves up to external testing, also helped craft the challenges. Over about 20 hours at the DEF CON conference in Las Vegas starting on Friday, an estimated 3,200 hackers will try their hand at tricking chatbots and image generators, in the hopes of exposing vulnerabilities.Įight companies are putting their models to the test: Anthropic, Cohere, Google, Hugging Face, Meta, Nvidia, OpenAI, and Stability AI. Study with Quizlet and memorize flashcards containing terms like What does Threatcon scale handle, What does the DEFCON scale determine, What are FPCONS and more. 
He is also a tenured computer science professor in natural language processing at the Naval Postgraduate School.The rise of artificial intelligence has brought with it a new kind of hacker: one who can trick an AI chatbot into lying, showing its biases, or sharing offensive information. Previously, he was the Head of Machine Learning at Lyft, the Head of Machine Intelligence at Dropbox, and led AI teams and initiatives at LinkedIn. Craig Martell is the first-ever Chief Digital and AI Officer at the Department of Defense.

Martell for an off-the-cuff discussion on what’s at stake as the Department of Defense presses forward to balance agility with accountability and the role hackers play in ensuring the responsible and secure use of AI from the boardroom to the battlefield.ĭr. This is a dangerous detour in AI’s development, one that humankind can’t afford to take. On the 40th anniversary of the movie that predicted the potential role of AI in military systems, LLMs have become a sensation and increasingly, synonymous with AI. This only legitimized the plot in the 1983 classic, War Games, of the possibility of a computer making unstoppable, life-altering decisions. In 1979, NORAD was duped by a simulation that caused NORAD (North American Aerospace Defense) to believe a full-scale Soviet nuclear attack was underway. Shall we play a game? Just because a Large Language Model speaks like a human, doesn’t mean it can reason like one.
